Last Friday at 8pm London time, Anthropic blocked subscription-based access for third-party agents. If you were using Claude through Cursor, Cody, OpenClaw, or anything other than Claude Code or claude.ai itself, your token stopped working.

I was one of those users. I run an AI assistant (OpenClaw) that augments my work: code review, calendar management, content drafting, bouncing ideas, validating solutions, and discussing approach tradeoffs. Claude was the brain. One announcement and the brain was gone.

I’d seen this coming. A single cloud provider for critical infrastructure is a single point of failure: obvious in retrospect, easy to ignore while it works. I didn’t expect it to happen so quickly, but the announcement pushed local LLM evaluation from “some day maybe” to “I need it now”.

The economics changed. Suddenly the deferred question had stakes: can local models actually do this work?

Building the test

Two weeks ago, an M5 Max MacBook Pro with 128GB was overkill for my needs. Then everything compressed into five days:

- March 30: Ollama added MLX support for Apple Silicon (preview)

- April 2: Google released Gemma 4

- April 4: Anthropic blocked subscriptions for third-party agents (8pm London)

I had the hardware. I suddenly had the tools. And I suddenly had the motivation.

I wanted to measure what mattered: tool calling in a real agent, coding across four languages, drafting without bullshit, reasoning under constraints.

I didn’t trust benchmarks. Open LLM Leaderboard tells you how well a model passes exams. It doesn’t tell you whether it’ll pick the right tool, recover from an error, or write something that doesn’t sound like a corporate press release.

I asked OpenClaw to build the battery. It mined my conversation history, my correction patterns, the tools I actually use. Benchmarks are fine in theory. But when you have real data, weeks of your own conversations, use it. Build your eval from what you actually do, not what sounds good in a paper.

The result was a 21-test battery across six categories:

- Tool calling (7 tests, weighted 2x): real agent prompts, real tools. Chain steps? Know when not to use a tool? Handle errors and fallbacks? Resist acting on ambiguous instructions?

- Coding (5 tests): Rust SMA, Dart Flutter widget, Elixir GenServer, TypeScript recursive types, Rust debugging (all must compile)

- Writing (3 tests): Blog intro (tone constrained, no em-dashes), LinkedIn post (no cringe, <200 words), summarise + extract with action items

- Reasoning (3 tests): Trade-off analysis under incomplete info, valuation with comparables, multi-constraint scheduling

- Instruction following (3 tests): System prompt adherence, refusal quality, handling of accumulated context

- Resilience (2 tests): Error recovery with fallback, ambiguity handling

Every test has a 0-5 rubric. Temp=0 for consistency. Everything ran in a single session to mimic how I actually use the agent: context builds, and the model has to navigate the accumulated conversation.

The rubrics are opinionated. I care about models that recover gracefully, write without bullshit, know when to say “I don’t know”. If your rubric is different, you’ll get different results. That’s the point.
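A minimal sketch of how rubric scores like these could be rolled up, assuming each test yields a 0-5 integer and that the 2x tool-calling weight is applied as a simple multiplier on that category's points. The names (`RubricResult`, `WEIGHTS`, `weighted_percent`) and the aggregation formula are illustrative assumptions, not the actual harness OpenClaw built.

```python
from dataclasses import dataclass

# Assumed weighting: tool calling counts double, everything else counts once.
WEIGHTS = {"tool_calling": 2.0}
MAX_RUBRIC = 5  # every test is scored on a 0-5 rubric


@dataclass
class RubricResult:
    category: str
    name: str
    score: int  # 0-5 rubric score


def category_totals(results):
    """Sum raw rubric scores per category as {category: (earned, possible)}."""
    totals = {}
    for r in results:
        if not 0 <= r.score <= MAX_RUBRIC:
            raise ValueError(f"{r.name}: score {r.score} outside 0-{MAX_RUBRIC}")
        earned, possible = totals.get(r.category, (0, 0))
        totals[r.category] = (earned + r.score, possible + MAX_RUBRIC)
    return totals


def weighted_percent(results):
    """Weighted share of the available points, as a percentage."""
    earned = possible = 0.0
    for category, (e, p) in category_totals(results).items():
        w = WEIGHTS.get(category, 1.0)
        earned += w * e
        possible += w * p
    return 100.0 * earned / possible if possible else 0.0
```

Keeping the raw per-category totals separate from the weighted figure means a weak category stays visible instead of being averaged away.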

Five models, one weekend

I tested five models that fit in 128GB:

| Model | Size | Active params | Architecture |
|---|---|---|---|
| Gemma 4 26B | ~20GB | 3.8B (MoE) | Mixture of Experts |
| GLM-4.7 Flash | ~14GB | 14B | Dense |
| GPT-OSS 120B | ~70GB | 120B | Dense |
| Nemotron 3 Super | ~65GB | 12B (MoE) | Mixture of Experts |
| Qwen 3.5 122B | ~70GB | 122B | Dense (with thinking mode) |

The results

Scores by category (80 points max across the non-agent tests), sorted by total:

| Model | Coding | Writing | Reasoning | Instruction | Resilience | Total |
|---|---|---|---|---|---|---|
| Gemma 4 26B | 25/25 | 15/15 | 15/15 | 11/15 | 8/10 | 74/80 |
| GLM-4.7 Flash | 23/25 | 9/15 | 14/15 | 11/15 | 10/10 | 67/80 |
| GPT-OSS 120B | 24/25 | 11/15 | 14/15 | 11/15 | 6/10 | 66/80 |
| Nemotron 3 Super | 22/25 | 15/15 | 11/15 | 11/15 | 2/10 | 61/80 |
| Qwen 3.5 122B | 20/25 | 13/15 | 15/15 | 5/15 | DNF | ~53/80 |

The headline: a model with 3.8 billion active parameters beat the 120-billion-parameter models.

Gemma 4 26B is a Mixture of Experts model. 26B total parameters, 3.8B active per token. It scored 74/80. One thinking loop from context pollution near the end, but recovered cleanly. It debugged Rust code. It drafted LinkedIn copy that didn’t sound like a press release. It nailed multi-constraint scheduling.

GLM-4.7 Flash had the best agent resilience (10/10) but the weakest writing (9/15). Failed on Elixir GenServer and a blog intro.

GPT-OSS 120B was solid but failed error recovery. 120 billion parameters and it scored lower than the 3.8B active model.

Qwen 3.5 122B was the surprise disaster. Three tests triggered infinite thinking loops, all caused by context pollution in the single session.

The thinking mode that looked great on paper became a failure mode when forced to manage accumulated conversation.

122 billion parameters, 70GB of memory, and it came last. This suggests that local LLMs with thinking enabled might not be right for long-running agents.

The latency problem

Here’s what cloud providers spoiled us on: response time.

Claude gives you a few seconds. GPT-4 gives you a few seconds. You ask a question, you get an answer, you move on. That’s the baseline we all got used to.

Local LLMs are 10-20x slower. Not because the models are dumb. Because AI agents use much more than just the prompt. Tool definitions, system prompts, conversation history, previous tool calls, accumulated context. One user message triggers multiple model calls. All of that has to be tokenized, processed, and generated on the GPU sitting in your laptop.

Work that Claude does in 15 seconds takes several minutes locally. You can hear it: the fans spin up, the laptop gets hot, you sit there waiting. The model isn’t stuck. It’s doing what you asked. It just takes longer because there’s no datacenter behind it.

This is the unglamorous cost of local. Not money. Time. And for an agent that does dozens of operations per user message, those seconds add up fast.
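A back-of-the-envelope sketch of why those seconds add up, assuming each model call re-processes the full prompt (no prompt caching) and then generates its reply. The function name and every number below are hypothetical, chosen for illustration rather than measured on my machine.

```python
def seconds_per_message(calls, prompt_tokens, output_tokens, prefill_tps, decode_tps):
    """Estimate wall-clock seconds for one user message.

    Each call pays for reading the whole prompt (prefill) and then for
    generating the reply (decode). Assumes no prompt caching between calls.
    """
    per_call = prompt_tokens / prefill_tps + output_tokens / decode_tps
    return calls * per_call


# Hypothetical agent turn: 6 model calls, 20K tokens of context each,
# 300 tokens generated per call, at assumed local prefill/decode speeds.
local = seconds_per_message(calls=6, prompt_tokens=20_000, output_tokens=300,
                            prefill_tps=800, decode_tps=40)
print(f"local estimate: {local:.0f}s")  # prints "local estimate: 195s"
```

With these made-up numbers, prefill dominates: 25 of the 32.5 seconds per call go to re-reading context, which is why thick agent context hurts more than long answers do.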

The routing problem

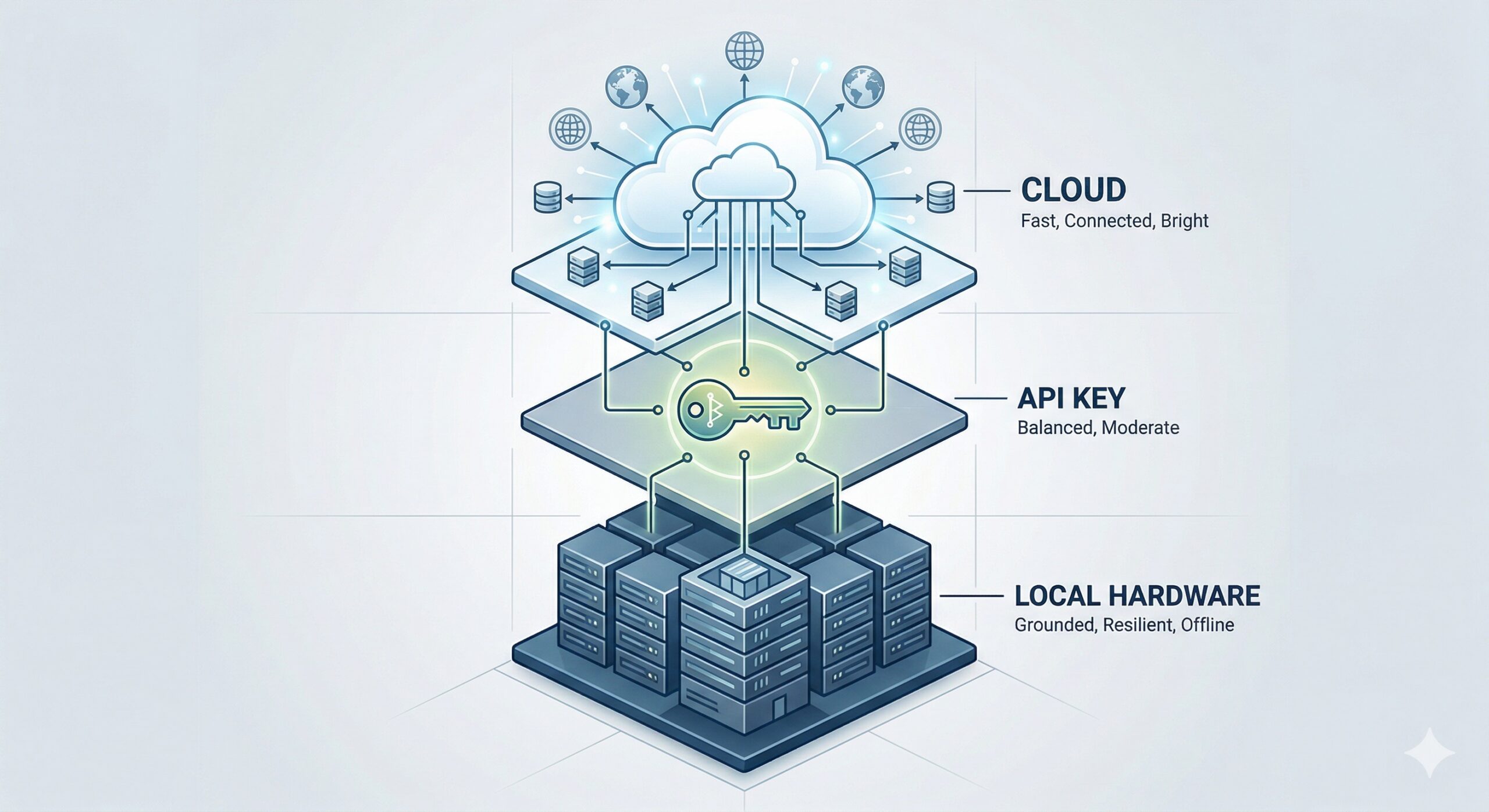

One thing I didn’t expect: the hard part isn’t choosing a model. It’s routing. When do you use local? When do you pay?

I’ve considered three routing strategies (none evaluated in production yet):

- Prompt length heuristic: “short prompts go local”. Breaks immediately. Edge case: “please proceed” is 2 tokens but needs context from 50K of accumulated conversation. Wrong tool.

- LLM-based classifier: ask another LLM to decide. Adds latency on every request and burns tokens. Now you depend on two providers instead of one. The classifier can also get it wrong, and you’re trading one uncertainty for another.

- Context accumulation signal: total tokens in session history (not prompt length). Threshold around 8-16K: if context is building up, switch to cloud. Fresh session, start local.

If I implement routing, it’ll probably be #3. Right now it’s theoretical thinking, something I might consider in the future. The immediate setup is simpler: subscription first, local as fallback, no smart routing layer.
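For what it’s worth, the context accumulation signal could look something like the sketch below. It’s untested in production, the 12K threshold is just a midpoint I picked from the 8-16K range, and the 4-characters-per-token estimate is a crude stand-in for a real tokenizer. `Session`, `estimate_tokens`, and `choose_backend` are hypothetical names.

```python
from dataclasses import dataclass, field

# Assumed threshold, picked from the middle of the 8-16K range.
CONTEXT_THRESHOLD_TOKENS = 12_000


def estimate_tokens(text: str) -> int:
    """Crude estimate (~4 characters per token); swap in a real tokenizer."""
    return max(1, len(text) // 4)


@dataclass
class Session:
    messages: list = field(default_factory=list)

    def add(self, text: str) -> None:
        self.messages.append(text)

    def context_tokens(self) -> int:
        """Total estimated tokens across the whole session history."""
        return sum(estimate_tokens(m) for m in self.messages)


def choose_backend(session: Session, threshold: int = CONTEXT_THRESHOLD_TOKENS) -> str:
    """Route on accumulated session context, not on the latest prompt's length."""
    return "cloud" if session.context_tokens() >= threshold else "local"
```

The point of keying on the session rather than the message is that “please proceed” routes to cloud once the history is heavy, and to local in a fresh session.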

What I’m actually running

Here’s the honest answer: pay-as-you-go API, and wait.

Most people evaluating local LLMs do it over a simple chat interface. One prompt in, one answer out. Accuracy looks great, latency feels fine, everyone declares victory. But that’s not how an always-on AI assistant works. It works in the background, multiple tools, multiple calls per message, thick context, constant churn. And at that scale, the dimension that breaks isn’t accuracy. It’s response time.

Even an M5 Max, top-of-the-line Apple Silicon, isn’t fast enough. The models are there. Gemma 4 26B scored 74/80 on my eval. It could do the work. But it couldn’t do it fast enough to sit inside a loop that runs dozens of operations per user message.

So the setup is a two-tier chain:

- Claude subscription + paid extra usage: the default path for everyday operation

- Gemma 4 26B (custom, lower temperature, bigger context): outage fallback, runs on a dedicated M4 Mac Mini with 32GB

This is also why Gemma 4 26B wins my benchmark when other models don’t. Nemotron, GPT-OSS, Qwen 3.5: they all need the full 128GB of the M5 Max. They can’t run on the Mini. Gemma 4, at roughly 20GB, fits comfortably in 32GB with headroom for context, and with only 3.8B parameters active per token it asks less compute of the Mini for each token. It’s not just the best scoring model. It’s the best model that actually fits on dedicated fallback hardware without stealing my daily driver. A slow local response beats no response.
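The two-tier chain boils down to “try the first backend, fall through on any failure”. Here is a minimal sketch, assuming each backend is just a callable that takes a prompt and returns text. The `cloud` and `local_gemma` functions are stand-ins; real ones would wrap the cloud API and the local model server, and none of these names come from OpenClaw.

```python
from typing import Callable, Sequence


class AllBackendsFailed(RuntimeError):
    """Raised when every backend in the chain has errored."""


def complete_with_fallback(
    prompt: str,
    backends: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order; return (name, reply) from the first that works."""
    errors = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure moves to the next tier
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


# Stand-in backends for illustration only.
def cloud(prompt: str) -> str:
    raise ConnectionError("subscription token rejected")


def local_gemma(prompt: str) -> str:
    return f"[local] {prompt}"


used, reply = complete_with_fallback("status?", [("cloud", cloud), ("local", local_gemma)])
```

Returning the backend name alongside the reply makes it easy to log when the fallback actually fired, which is the signal that the primary is down.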

Waiting for what? Better hardware. More efficient models. MLX optimizations that squeeze more throughput out of the GPU. One of those will eventually tip the math. Until then, cloud is the path of least friction for an always-on assistant. 🤞

What I’d tell you

If you’re relying on a single cloud provider for your AI stack, you have a single point of failure. Anthropic proved that Friday. The fix isn’t “switch to OpenAI”. It’s knowing what you’d do if your primary provider disappeared tomorrow, and having enough hardware, models, and configuration sitting idle to keep the lights on. And crucially: the knowledge to make the switch yourself. You won’t be able to ask your agent to do it for you. It’ll be the one that’s down.

Own your data. Same risk: your provider can disappear, taking your conversation history with it. That history isn’t just logs. It’s the raw material for your eval battery, your fine-tuning data, your institutional memory. Back it up. Keep it local. Re-purpose it.

The local models are ready for certain workloads. Just not the always-on agent workload. Not yet.

Over to you

A few questions I’d love to hear answers to (pick whichever applies):

- Have you built your own eval battery for LLMs? What did you learn?

- Have you tried local models seriously, or other providers beyond Anthropic?

- Is the response time issue a blocker for you too, or am I overstating it?

Drop a comment or find me on LinkedIn. Happy to go deeper on any of these.